How Do Your Website's Pages Get Ranked in Google Search?

I know many of you are wondering how Google Search works and how website pages get ranked in its results.

We are all well aware of how important Search Engine Optimization (SEO) is for a website.

Everyone wants their posts to appear in the top 10 search results so they can attract a large number of visitors!

So, today I am going to talk about web crawlers (also known as spiders, bots, or robots), which discover the pages that search engines later index and rank.

To understand what a web crawler does, let's start with a quick look at how Google Search works.


How Does Google Search Work?

Ever wondered how search engines answer every query so quickly? I'm pretty sure you have. 😉

Google relies on three major processes to discover, organize, and serve web pages. Crawling and indexing happen continuously in the background; serving happens when you actually run a search:

- Crawling

- Indexing

- Serving

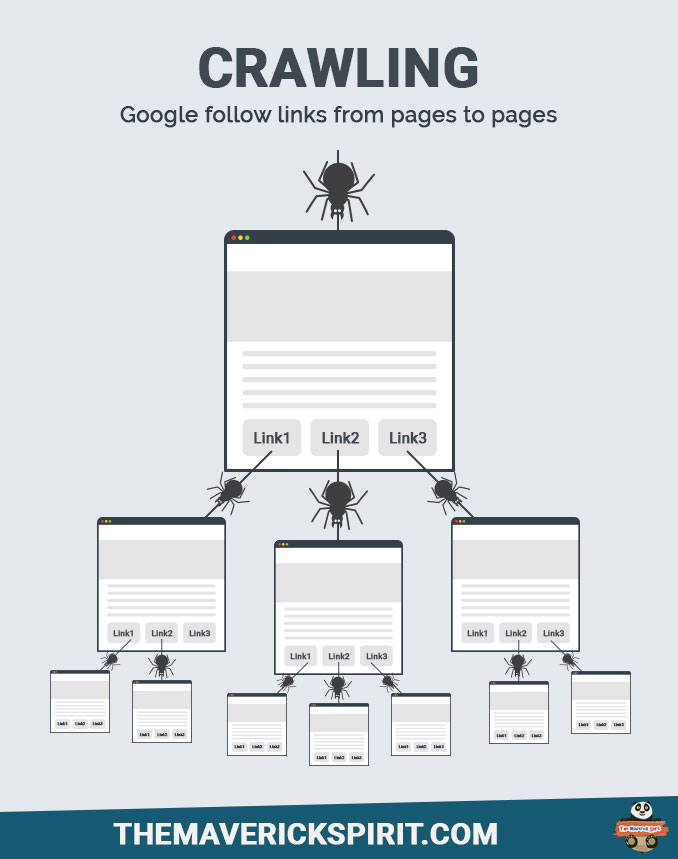

1. Crawling

Crawling is the process by which web crawlers find and retrieve new and updated pages on the World Wide Web (WWW).

Google uses web crawlers to traverse the web in search of new pages, which are then added to the Google index.

Google follows links from page to page. The pages it finds are later sorted using factors such as:

- Page and site quality,

- Content freshness,

- PageRank,

- Domain authority,

- Outbound link quality,

- Safe search,

- User context,

- Translation,

- Universal search,

- Spam fighting, to keep your results relevant.

So, basically, a web crawler is a software program that finds and retrieves new pages and updates the Google index.

An algorithmic process decides which sites to crawl, how often to crawl them, and how many pages to fetch from each site.

Googlebot's crawl works roughly like this:

- It starts from the URLs stored in Google's database from previous crawls, along with the sitemaps that site owners provide.

- It visits each of those URLs.

- It adds the links found on those pages to the database so they can be crawled next.
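The three steps above can be sketched as a simple breadth-first crawl. This is a minimal illustration under stated assumptions, not Googlebot's actual algorithm: the tiny in-memory `WEB` dictionary and the `fetch_links` helper are hypothetical stand-ins for downloading pages and extracting their links, and a real crawler would also schedule revisits, respect robots.txt, and limit how fast it requests pages from each site.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
# A real crawler would download and parse the HTML instead.
WEB = {
    "https://example.com/": ["https://example.com/about", "https://example.com/blog"],
    "https://example.com/about": ["https://example.com/"],
    "https://example.com/blog": ["https://example.com/blog/post-1"],
    "https://example.com/blog/post-1": ["https://example.org/"],
    "https://example.org/": [],
}


def fetch_links(url: str) -> list[str]:
    """Hypothetical stand-in for fetching a page and extracting its outgoing links."""
    return WEB.get(url, [])


def crawl(known_urls: list[str], sitemap_urls: list[str], max_pages: int = 100) -> list[str]:
    """Breadth-first crawl seeded from previously known URLs plus sitemap URLs."""
    frontier = deque()  # URLs waiting to be visited
    seen = set()        # every URL ever queued, so nothing is queued twice
    for url in known_urls + sitemap_urls:
        if url not in seen:
            seen.add(url)
            frontier.append(url)

    visited = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        for link in fetch_links(url):
            if link not in seen:  # newly discovered link: queue it for a later visit
                seen.add(link)
                frontier.append(link)
    return visited


print(crawl(["https://example.com/"], ["https://example.com/blog"]))
```

The `seen` set is what keeps the crawler from looping forever when pages link back to each other, as `/about` does here.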

Note: You can tell Googlebot and other spiders how to crawl your website by maintaining a robots.txt file.
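As a rough illustration of how a well-behaved crawler might check robots.txt rules before fetching a page, here is a sketch using Python's standard-library `urllib.robotparser` module. The rules and URLs are hypothetical, and this is not how Googlebot itself is implemented; Google's parser supports extra syntax (such as `*` wildcards inside paths) that this simple prefix-matching parser may not handle.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: Googlebot may crawl everything except /private/,
# while all other bots are blocked from the whole site.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse the rules directly, no network request

print(parser.can_fetch("Googlebot", "https://example.com/blog/post-1"))   # True
print(parser.can_fetch("Googlebot", "https://example.com/private/data"))  # False
print(parser.can_fetch("OtherBot", "https://example.com/blog/post-1"))    # False
```

In a live crawler you would normally call `set_url()` and `read()` to download the site's real robots.txt instead of parsing a hard-coded string.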

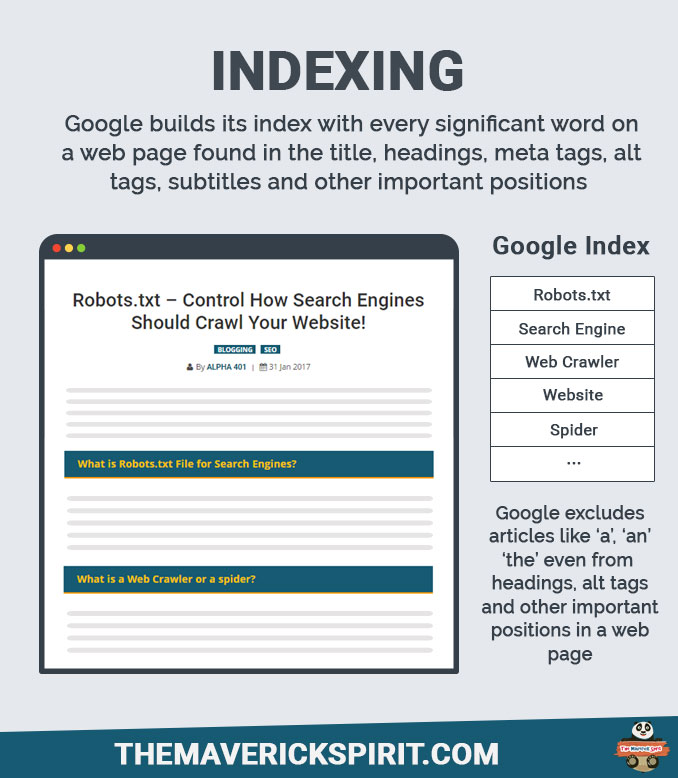

2. Indexing

Once pages have been crawled, there can be hundreds of thousands of possible results for your search term!

How does Google decide which document we really want?

Before Google indexes a page, it asks questions like these to decide which pages to index:

- How many times does this page contain a keyword?

- Do the words appear in the title and in the URL?

- Does the page include synonyms for the search terms?

- Is this page from a high-quality website, or from a low-quality or even spammy one?

- What is the page's PageRank? PageRank is the formula that rates a page's importance by looking at how many outside links point to it and how important those links are.

Finally, once pages have been indexed based on the above criteria, the matching ones are combined and sent back as your search results.

That is how Google does it! Every search engine follows a different approach to indexing.
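To make the first few indexing questions concrete, here is a toy inverted index with a made-up scoring rule: keyword count in the body, plus bonuses when the keyword appears in the title or URL. The pages, the `TITLE_BONUS` and `URL_BONUS` weights, and the `search` helper are all hypothetical; Google's real signals and weights are not public, and synonyms, site quality, and PageRank are not modeled here.

```python
import re
from collections import defaultdict

# Hypothetical pages: URL -> (title, body text).
PAGES = {
    "https://example.com/seo-basics": (
        "SEO basics",
        "SEO helps pages rank. Good SEO starts with content.",
    ),
    "https://example.com/recipes": (
        "Easy recipes",
        "Quick dinner recipes, plus a note on SEO for food blogs.",
    ),
}

# Made-up bonus weights, for illustration only.
TITLE_BONUS = 5
URL_BONUS = 3


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into runs of letters and digits."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages: dict[str, tuple[str, str]]) -> dict[str, dict[str, int]]:
    """Inverted index: word -> {url: number of times the word appears in the body}."""
    index = defaultdict(dict)
    for url, (_title, body) in pages.items():
        for word in tokenize(body):
            index[word][url] = index[word].get(url, 0) + 1
    return index


def score(word: str, url: str, pages, index) -> int:
    """Toy relevance score: body frequency plus title and URL bonuses."""
    title, _body = pages[url]
    points = index.get(word, {}).get(url, 0)  # how often the keyword appears in the body
    if word in tokenize(title):
        points += TITLE_BONUS  # keyword appears in the title
    if word in tokenize(url):
        points += URL_BONUS  # keyword appears in the URL
    return points


def search(word: str, pages, index) -> list[str]:
    """Return the URLs whose body contains `word`, best-scoring first."""
    word = word.lower()
    candidates = index.get(word, {})
    return sorted(candidates, key=lambda url: score(word, url, pages, index), reverse=True)


index = build_index(PAGES)
print(search("SEO", PAGES, index))
```

The inverted index is the key design choice: looking up a word returns only the pages that contain it, so the search never has to scan every page at query time.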

3. Serving

When a user searches for anything, Google serves the results it considers most relevant to the user's query.

To understand users' queries better, Google uses various search algorithms and methods, such as:

- Autocomplete,

- Spelling,

- Synonyms,

- Query understanding,

- Google Instant,

- And much more.
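Two of these query-understanding features, autocomplete and spelling correction, can be illustrated with a very small sketch. The query list and vocabulary are hypothetical, and the approach (prefix matching, plus the standard-library `difflib.get_close_matches` for similar words) is a simple stand-in; Google's actual systems are far more sophisticated and are not described in this post.

```python
import difflib

# Hypothetical popular past queries and known vocabulary.
QUERIES = [
    "how google search works",
    "how to bake bread",
    "google search console",
    "seo ranking factors",
]
VOCABULARY = ["google", "search", "ranking", "crawler", "index"]


def autocomplete(prefix: str, queries: list[str], limit: int = 3) -> list[str]:
    """Suggest known queries that start with what the user has typed so far."""
    prefix = prefix.lower()
    return [q for q in queries if q.startswith(prefix)][:limit]


def correct_spelling(word: str, vocabulary: list[str]) -> str:
    """Return the closest known word, or the original word if nothing is close enough."""
    matches = difflib.get_close_matches(word.lower(), vocabulary, n=1, cutoff=0.8)
    return matches[0] if matches else word


print(autocomplete("how", QUERIES))           # ['how google search works', 'how to bake bread']
print(correct_spelling("gogle", VOCABULARY))  # google
```

The `cutoff=0.8` threshold is an arbitrary choice: lower values suggest corrections more aggressively, higher values only fix near-misses.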

Google determines relevance using over 200 factors, one of which is PageRank.

PageRank works by counting the number and quality of links to a page to determine a rough estimate of how important the website is. The underlying assumption is that more important websites are likely to receive more links from other websites.

Source: Wikipedia

Of course, Google tries to discount spam links and count only quality links, for a better user experience.
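The idea in the quote above can be sketched with the classic simplified PageRank computed by power iteration: each page repeatedly passes a share of its score to the pages it links to. The link graph is hypothetical, the damping factor of 0.85 is the value commonly used in descriptions of the original algorithm, and this is not Google's current implementation (spam-link filtering, for example, is not modeled).

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85, iterations: int = 50) -> dict[str, float]:
    """Simplified PageRank by power iteration.

    `links` maps each page to the pages it links to; every link target must also
    be a key. A page with no outgoing links spreads its score evenly over all pages.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start with equal scores
    for _ in range(iterations):
        # Every page gets a small base score, then receives shares from its in-links.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            targets = outgoing if outgoing else pages  # dangling page: share with everyone
            share = damping * rank[page] / len(targets)
            for target in targets:
                new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical link graph: page -> pages it links to.
LINKS = {
    "A": ["B", "C"],
    "B": ["C"],
    "C": ["A"],
    "D": ["C"],
}

ranks = pagerank(LINKS)
for page, value in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(f"{page}: {value:.3f}")
```

Page C, which receives links from three of the four pages, ends up with the highest score, while D, which nobody links to, keeps only the base score.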

Factors For Google Ranking

SEO ranking factors have changed dramatically over the past few years. Here are some important factors that contribute to better search engine rankings:

1. Site & Page Quality

Site or page quality refers to your website's content, appearance, functionality, usability, and SEO factors.

Always keep in mind that your audience is looking for an answer to their problem, so help them by providing informative and relevant content. This way, you build their trust and, in turn, your standing in Google's eyes.

2. SafeSearch

You might be wondering what Google SafeSearch does.

It acts as a filter that screens out explicit content, such as adult web pages, images, and videos, and removes it from search results.

3. User Context

User context revolves around the user's situation, interests, and awareness.

Google uses it to provide more relevant results based on factors such as geographic region, web history, and more.

4. Translation & Internationalization

Google filters results based on the user's language and country.

5. Universal Search

You might have noticed a separate box at the top of your search results featuring additional information.

This information comes from blending in results from vertical searches for content such as images, news, maps, and videos, and even your personal content.

Conclusion

If you want your site to rank well in search results, make sure Google is crawling and indexing your site the way you want it to.

What measures do you take to get your pages indexed well in Google Searches?
